So that kind of means that the high-end AAA PC market will crash in the next few years, right? No new GPUs, a production stop for existing GPUs, and rising GPU and RAM prices, combined with inflation and a bad economy, mean that many people can’t afford a gaming computer, and that a lot of younger gamers can’t afford to start this hobby.

And that means a shrinking audience for games that need all this GPU power. If you’re an AAA publisher, it looks kind of crazy to invest many millions into a game when you can’t be sure your audience will be able to afford to play it.

Definitely a shrinking audience for AAA games, but I don’t think it will be too bad for gamers overall. Consoles will keep marching forward, as will Valve with the Steam Deck and Steam Machine.

I think the highest of the high end graphics stuff has long since hit diminishing returns. You can do a hell of a lot with yesterday’s hardware and less-than-bleeding-edge process nodes for newer hardware. Consoles have never used bleeding edge GPUs and they’ve always done fine with sales (across the whole market, if not always individually). I think we’re highly unlikely to see a repeat of the 1983 gaming crash.

They do fine in sales because the consoles are sold at a loss and they make money on game sales.

Microsoft, Sony and Nintendo would love to stop producing consoles and start selling you a monthly service via a thin client. They just need a ready-to-go platform for it to gain enough mass first.

Nintendo has never sold their consoles at a loss. They sell them at a small profit which then grows to a larger profit as the cost of making them decreases.

No, not really. AMD is still producing cards. Most people play on older or used cards anyway. Maybe don’t make Crysis-level graphics, but other than that, one year of fewer GPU releases won’t kill gaming. Once the AI bubble bursts, NVIDIA might have lost a lot of its edge over AMD in the gaming market and they’ll scramble to get it back.

“dont you have phones?”

I think it’ll have the opposite effect. Knowing the hardware won’t change in the next year, they don’t have to worry about making it compatible with the new cards. They can focus on building upon what they already have.

And as someone who helped pick out a fantastic PC for my little cousin in December last year: she paid ~$500 for pretty decent hardware, and so far she hasn’t found a game in my library her PC can’t handle, including “Warhammer 40,000: Space Marine 2”.

There’s plenty of hardware for younger people that want to get into the hobby. You don’t always need the absolute latest.

Someone is going to make bank by catering to consumers. Will the market accept nvidia back with open arms if/when the ai investments fall through?

Well what do most victims of exploitation and abuse do?

Visiting Stockholm?

I hear it’s nice

Most people are willing to sell their morals. When nvidia comes crawling back it will be like nothing ever happened.

As a Linux gamer, nvidia was already on thin ice.

Also, I had passed them up on recent-ish purchases since they only really controlled the highest end of the market, which I don’t have the budget for. So honestly I have no intention of welcoming them back unless there is literally no other option. You made your bed.

That would be nice. But video cards are a VERY niche piece of engineering. The knowledge of HOW to make them is locked in a handful of people, and the ability to make them locked behind a very niche set of equipment that will ALSO be exploding in cost.

One does not simply start a graphics card company.

I don’t think a newcomer could do it, but a company like Intel is poised to be in a good position. They don’t have much market share but they have a good product.

The problem with that is Intel is subject to the same bullshit economic assessments as AMD and Nvidia… They’ll just as soon retool for ai as well.

Intel is arguably worse. They’re in a bad spot right now so they can’t do crazy things like Nvidia, but they totally would and will go down the same path. I don’t think US-designed hardware will ever truly come back to end-consumer products.

Nothing will. They’re moving us onto techno feudalism. We won’t earn anything and we’ll be wage slaves if they’re merciful to us, otherwise most people will be in camps and dead, unless we stand up real damn soon.

They’re actively moving away from the bottom 90% of consumers. It’s just not worth it anymore; maybe it was once worth advertising to us, but no longer. The top 10% owns 93% of stocks and controls at least 55% of the market revenue as of early 2025, probably closer to 60-65% now, after tariffs, layoffs, and the nonexistent recession we’re all imagining that definitely isn’t real. /s

Intel, here’s your big chance!

Intel is partly owned by the US government now. You think they want tech going to the people when they themselves want it for Skynet?

Stop buying Nvidia

Easy enough when they’re not selling

Well, they are helping out with that one…

I wish there were more laptops using AMD gpus here in Brazil. You basically can’t find any laptop with an AMD gpu if you search for “gamer laptop” in Brazilian stores.

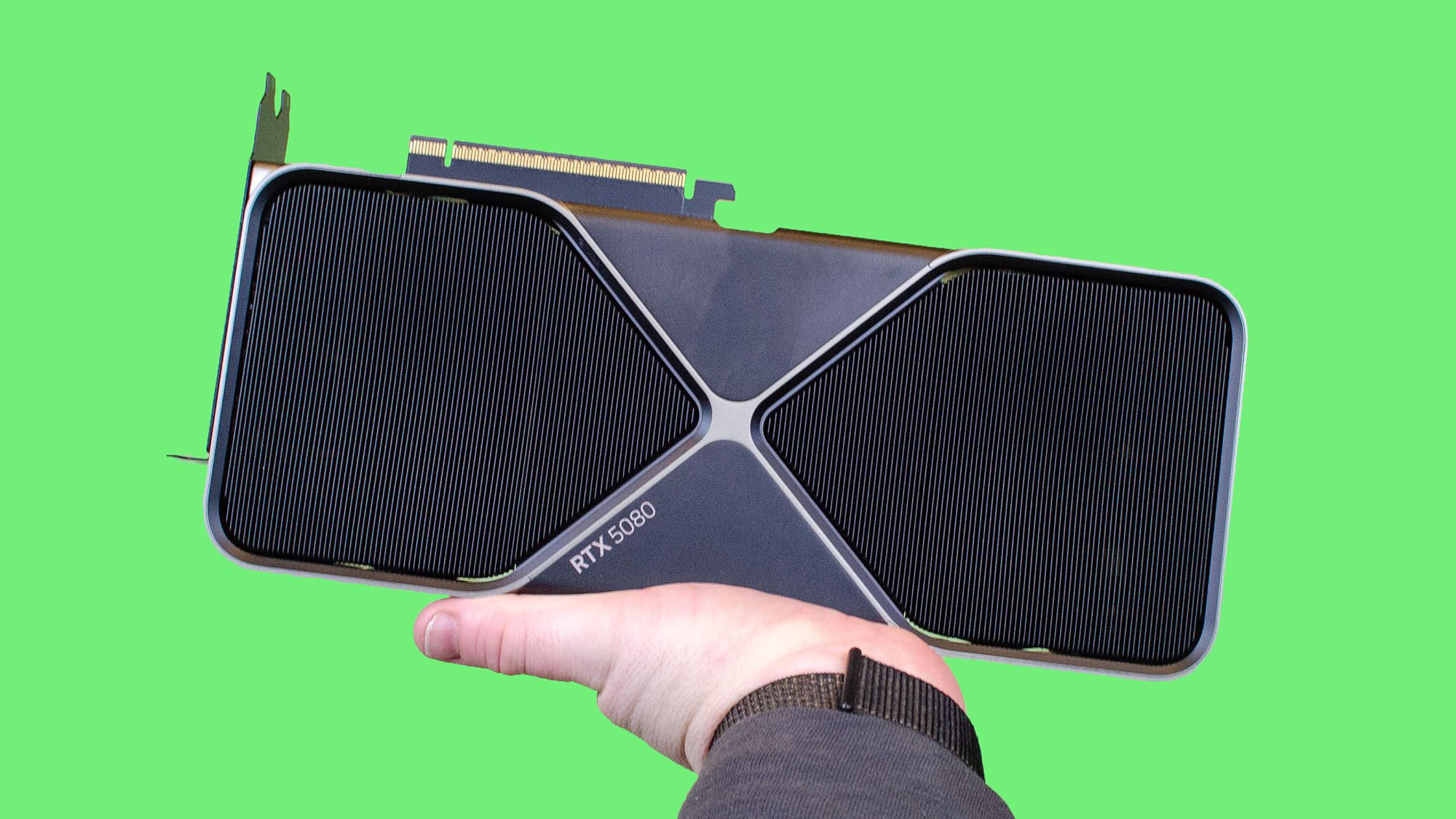

I will when someone makes a GPU that can surpass a 4090. Not even Nvidia themselves can pull that off, so I’m not getting my hopes up.

I’m going to be stuck with this GPU for the next decade the way things are going…not that I’m complaining. It’s a beast of a card, especially for someone like me who could only ever afford bargain bin parts until one day I came into a windfall. (That was a fun 4 years.) I don’t have to worry about games being unoptimized because I can simply brute force them with pure GPU processing power. I was getting 90 FPS in Last of Us on launch. Even Cities Skylines 2 runs smoothly.

You’re not actively buying from them by using what you have. Nobody buys a downgrade.

The time to stop buying Nvidia, and so prevent them from gaining a practically total monopoly on the entire market, was 10 years ago, not now.

Now, I’ll consider buying a GPU from you instead if you can make a GPU that satisfies technical needs like Nvidia could, but you cannot.

AMD’s cards over the last 10 years have been great. No overheating like they had 20 years ago.

While AMD is no angel, I’m glad I went for the Radeon RX 9070 XT this time. Really good GPU and fuck NVIDIA. I hope unified RDNA5 will work out for AMD.

I have gone all AMD graphics since converting to Linux. My 9060XT 16GB and 6600 8GB both are going strong.

Fuck NVidia.

I went with Intel Arc since I don’t actually need GPU processing power so much as a decent media engine and VRAM for future projects, and Intel has that ready to go under Linux. On the CPU side, AMD is the only option that makes sense, and for gaming, AMD’s GPUs have already been the practical option for years, but their media engines are trash.

But we don’t need NVIDIA and we don’t even need high end GPUs as much as we think we do.

Let Nvidia go bankrupt, we won’t miss it

I really hope Nvidia collapses when the AI bubble pops. They’ve been more harm than good for consumers for too long.

What could a GPU cost? $5000?

Hey, I’ve seen this one before.

Last time it was crypto instead of AI, but other than that it’s just the same shit again.

As someone not looking to spend a ton of money on new hardware any time soon: good. The longer it takes to release faster hardware, the longer current hardware stays viable. Games aren’t going to get more fun by slightly improving graphics anyway. The tech we have now is good enough.

People don’t just use computers for gaming. If this continues, people will struggle to do any meaningful work on their personal computers, which is definitely not good. And I’m not talking about browsing Facebook but about coding, doing research, editing videos and other useful shit.

But wait! They can pay for remote computing time for a fraction of the cost! Each month. Forever.

I fully expect personal computers to be phased out in favor of a remote-access, subscription model. AI popping would leave these big data centers with massive computational power available for use, plus it’s the easiest way to track literally everything you do on your system.

> easiest way to track literally everything you do on your system.

And ban undesired activities. “We see you’re building an app to track ICE agents. That’s illegal. Your account was banned and all your data removed.”

“Remain in your cube - The Freedom Force is en route to administer freedom reeducation. Please be sure to provide proof of medical insurance prior to forced compliance.”

This is true but at the current computer prices, nowhere near as bad as it sounds. I spend £100/year or thereabouts for GeForce Now, and

- there’s no way I could play games on a £500 laptop that I renew every 5 years,

- no way that a £1000 laptop could get me to play AAA games for more than 1-2 years

- and sure, I could play games on a £2000 laptop, but no way that will last me 20 years.

If you have a life and can’t play any more than 25 hours a week, the value proposition right now is great - there’s no viable alternative that allows you to keep playing AAA games for the equivalent of £100/year.
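The arithmetic behind that comparison can be sketched roughly like this. It is only a back-of-the-envelope illustration: the prices and the £100/year figure come from the comment above, while the function names are made up for the example and costs like electricity, resale value and internet are ignored.

```python
# Rough sketch of the laptop-vs-subscription arithmetic from the comment above.
# All helper names are hypothetical; figures are the commenter's ballpark numbers.

def annual_cost(price_gbp: float, lifetime_years: float) -> float:
    """Purchase price spread evenly over the years the machine stays usable."""
    return price_gbp / lifetime_years


def break_even_years(price_gbp: float, subscription_per_year: float) -> float:
    """Years a machine must last for its price to match the subscription fee."""
    return price_gbp / subscription_per_year


SUBSCRIPTION = 100.0  # assumed: ~£100/year for the streaming service

for price in (500.0, 1000.0, 2000.0):
    years = break_even_years(price, SUBSCRIPTION)
    print(f"£{price:.0f} laptop must last {years:.0f} years to match £{SUBSCRIPTION:.0f}/year")
```

By this estimate a £2000 laptop would have to stay viable for 20 years to cost the same per year as the subscription, which is the point the comment is making.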

Fuck, you almost sold me on GeForce Now. Owning is still a better value proposition for me because I get my games at… steep discounts.

> I fully expect personal computers to be phased out in favor of a remote-access, subscription model

I wouldn’t hold my breath.

You can write code just fine on 20 or even 30 year old hardware. Basically if it runs Linux, chances are it can also run vim and compile code. If you spring for 10-15 year old hardware, you can even get an LSP + coc or helix, for error highlighting and goto definition and code actions. And you definitely don’t need a beefy GPU for it (unless you’re doing something GPU-specific of course).

Editing 720p videos (which, if you encode with a high enough bitrate, still looks alright) can be done on 10-15 year old hardware.

Research is where it gets complicated. It does indeed often require a lot of computing power to do modern computational research. But for some simpler stuff - especially outside STEM - you can sometimes get away with a LibreOffice spreadsheet on an old Dell or something.

From the looks of it we will have to get used to doing more with less when it comes to computers. And TBH I’m all for it. I just hope that either my job won’t require compiling a lot more stuff, or they provide me with a modern machine at their expense.

Dude, I’m coding every day and I know what hardware requirements I have. You can write some code slowly on a potato, but a lot of software development requires tons of RAM and a powerful CPU. Linus Torvalds is using a Threadripper 9960X for a reason.

It’s nicer to develop anything on a beefy machine, I was rocking a 7950X until recently. The compile times are a huge boon, and for some modern bloated bullshit (looking at you, Android) you definitely need a beefy machine to build it in a realistic timeframe.

However, we can totally solve a lot of real-world problems with old cheap crappy hardware, we just never wanted to because it was “cheaper” for some poor soul in China to build a new PC every year than for a developer to spend an extra week thinking about efficiency. That appears to be changing now, especially if your code will be running on consumer hardware.

My dad used to “write” software for basic aerodynamic modelling on punchcards, on a mainframe that had about as much computing power as some modern microcontrollers. You wouldn’t even consider it a potato by today’s standards. I’m sure if we use our wit and combine it with arcane knowledge of efficient algorithms, we can optimize our stacks to compile code on a friggin 3.5GHz 10-core CPU (which are 10 years old now).

I’m sure some people would still be able to code just fine on crap hardware but it’s silly to think Open Source will not suffer if access to good hardware is limited.

I can code my stuff ok on an older model. I’m sure there are some stacks that need more resources, but I’m having a hard time thinking of which.

Admittedly, on a laptop that is 20+ years old, I cannot surf the web AND run Docker at the same time.

Just running rust-analyzer for a not-so-big project requires 5GB of RAM. Inspecting libraries will start another process, and I sometimes have 2 projects open at the same time. You can work without it, using a simple text editor, but DX will be way worse and unless you’re some Rust guru you will work slower. Compilation time will be way worse on an older CPU, so you will iterate slower. That’s why Linus is using a Threadripper.

Running integration tests for the Java project I’m working on maxes out my 16-core CPU. My co-workers with older laptops struggled to set up the development environment and build the project because they were constantly running out of RAM.

Yes, we were all writing code 20 years ago in vim with just syntax highlighting but new stacks and new tools require new hardware.

I’m not running fucking vim for software development

Honestly it’s fine. LSPs are nice but you don’t need them per se. A combination of vim, tmux, entr, a fast incremental compiler, grep, and proper documentation can get you a long way there.

A lot of critically important code that’s running the servers we’re using to communicate was written this way. And, if capitalist decline continues long enough, we will all eventually be begging for vim while writing code with ed.

Personally I use helix with an LSP, because it helps speed up development quite a bit. I even have a local LLM for writing repetitive boilerplate bullshit. But I also understand that those are ultimately just tools that speed the process up, they do not fundamentally change what I’m doing.

> If this continues people will struggle to do any meaningful work on their personal computers

Excel users devastated.

We’re running straight into a future where consumers’ only option for computers is a cloud solution like MS 365

Pushing constantly towards a subscription economy.

That “economy” is already falling apart. Subscriptions are down, services on “the cloud” are becoming less reliable, piracy is way up again, and major nations and companies are moving to alternatives.

Hell, DDR3 is making a comeback. All that is needed is one manufacturer to start making 15 year old tech again and bam, the house of cards falls.

If you want to do work with the GPU you’re still buying NVIDIA. Particularly 3D animation, video/film editing, and creative tools. Even FOSS tools like GIMP and Krita prefer NVIDIA for GPU accelerated functions.

Would this mean AMD finally gets the demand it deserves?

Unfortunately AMD is affected by the RAM shortage too.

AMD is arguably more affected. Intel’s CPUs have memory built into them, and Intel bought about a year’s worth of memory.

LOL fat fucking chance

Despite them being perfectly worthy competitors, gamers will literally never buy AMD.

I did :( I’m very happy with it too.

Same. I only buy AMD.

I buy the card with the highest benchmark scores that I can afford. It’s not a political green vs red choice.

They didn’t have a high end laptop option when I was shopping. If they want my money I expect an 80 series equivalent in my laptop. If not, I’ll probably just end up with a 7080 or 7090 if NVIDIA still does it then.

I’ll see statements like this thrown around, meanwhile the PS5, Xbox Series X and even the upcoming Steam Machine are all AMD hardware.

AMD embedded hardware is quite a bit different from dedicated GPUs. They never break 20% in the Steam survey for any kind of GPU; they were trending closer to 10-15% for the longest time.

More and more gamers are seeing Linux as the OS of choice, so hopefully that will mean more interest in AMD as well.

I know Radeons don’t really have the performance crown, but as a lifelong Nvidia GPU and Linux user, the PITA drivers are not a problem when you use an AMD Radeon card.

They’re AI only now.

So they can sell them for even more thanks to scarcity I’m guessing.