Text to avoid paywall

The Wikimedia Foundation, the nonprofit organization which hosts and develops Wikipedia, has paused an experiment that showed users AI-generated summaries at the top of articles after an overwhelmingly negative reaction from the Wikipedia editors community.

“Just because Google has rolled out its AI summaries doesn’t mean we need to one-up them, I sincerely beg you not to test this, on mobile or anywhere else,” one editor said in response to Wikimedia Foundation’s announcement that it will launch a two-week trial of the summaries on the mobile version of Wikipedia. “This would do immediate and irreversible harm to our readers and to our reputation as a decently trustworthy and serious source. Wikipedia has in some ways become a byword for sober boringness, which is excellent. Let’s not insult our readers’ intelligence and join the stampede to roll out flashy AI summaries. Which is what these are, although here the word ‘machine-generated’ is used instead.”

Two other editors simply commented, “Yuck.”

For years, Wikipedia has been one of the most valuable repositories of information in the world, and a laudable model for community-based, democratic internet platform governance. Its importance has only grown in the last couple of years during the generative AI boom as it’s one of the only internet platforms that has not been significantly degraded by the flood of AI-generated slop and misinformation. As opposed to Google, which since embracing generative AI has instructed its users to eat glue, Wikipedia’s community has kept its articles relatively high quality. As I reported last year, editors are actively working to filter out bad, AI-generated content from Wikipedia.

A page detailing the AI-generated summaries project, called “Simple Article Summaries,” explains that it was proposed after a discussion at Wikimedia’s 2024 conference, Wikimania, where “Wikimedians discussed ways that AI/machine-generated remixing of the already created content can be used to make Wikipedia more accessible and easier to learn from.” Editors who participated in the discussion thought that these summaries could improve the learning experience on Wikipedia, where some article summaries can be quite dense and filled with technical jargon, but that AI features needed to be clearly labeled as such and that users needed an easy way to flag issues with “machine-generated/remixed content once it was published or generated automatically.”

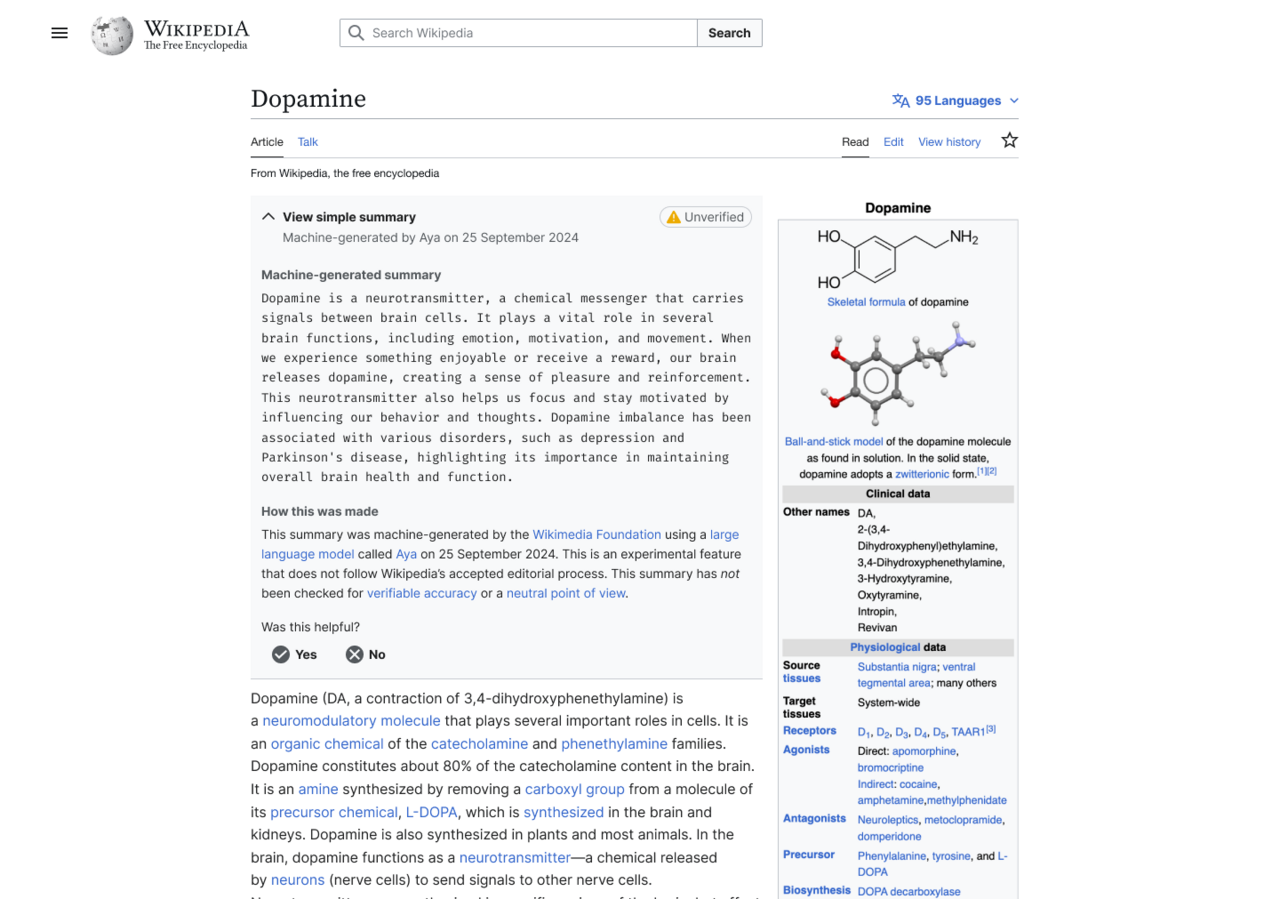

In one experiment where summaries were enabled for users who have the Wikipedia browser extension installed, the generated summary showed up at the top of the article, which users had to click to expand and read. That summary was also flagged with a yellow “unverified” label.

An example of what the AI-generated summary looked like.

Wikimedia announced that it was going to run the generated summaries experiment on June 2, and was immediately met with dozens of replies from editors who said “very bad idea,” “strongest possible oppose,” “Absolutely not,” etc.

“Yes, human editors can introduce reliability and NPOV [neutral point-of-view] issues. But as a collective mass, it evens out into a beautiful corpus,” one editor said. “With Simple Article Summaries, you propose giving one singular editor with known reliability and NPOV issues a platform at the very top of any given article, whilst giving zero editorial control to others. It reinforces the idea that Wikipedia cannot be relied on, destroying a decade of policy work. It reinforces the belief that unsourced, charged content can be added, because this platforms it. I don’t think I would feel comfortable contributing to an encyclopedia like this. No other community has mastered collaboration to such a wondrous extent, and this would throw that away.”

A day later, Wikimedia announced that it would pause the launch of the experiment, but indicated that it’s still interested in AI-generated summaries.

“The Wikimedia Foundation has been exploring ways to make Wikipedia and other Wikimedia projects more accessible to readers globally,” a Wikimedia Foundation spokesperson told me in an email. “This two-week, opt-in experiment was focused on making complex Wikipedia articles more accessible to people with different reading levels. For the purposes of this experiment, the summaries were generated by an open-weight Aya model by Cohere. It was meant to gauge interest in a feature like this, and to help us think about the right kind of community moderation systems to ensure humans remain central to deciding what information is shown on Wikipedia.”

“It is common to receive a variety of feedback from volunteers, and we incorporate it in our decisions, and sometimes change course,” the Wikimedia Foundation spokesperson added. “We welcome such thoughtful feedback — this is what continues to make Wikipedia a truly collaborative platform of human knowledge.”

“Reading through the comments, it’s clear we could have done a better job introducing this idea and opening up the conversation here on VPT back in March,” a Wikimedia Foundation project manager said. VPT, or “village pump technical,” is where the Wikimedia Foundation and the community discuss technical aspects of the platform. “As internet usage changes over time, we are trying to discover new ways to help new generations learn from Wikipedia to sustain our movement into the future. In consequence, we need to figure out how we can experiment in safe ways that are appropriate for readers and the Wikimedia community. Looking back, we realize the next step with this message should have been to provide more of that context for you all and to make the space for folks to engage further.”

The project manager also said that “Bringing generative AI into the Wikipedia reading experience is a serious set of decisions, with important implications, and we intend to treat it as such,” and that “We do not have any plans for bringing a summary feature to the wikis without editor involvement. An editor moderation workflow is required under any circumstances, both for this idea, as well as any future idea around AI summarized or adapted content.”

Why the hell would we need AI summaries of a wikipedia article? The top of the article is explicitly the summary of the rest of the article.

some article summaries can be quite dense and filled with technical jargon, but that AI features needed to be clearly labeled as such and that users needed an easy way to flag issues with “machine-generated/remixed content once it was published or generated automatically.”

I feel like if they think this is an issue, they should generate the summary on the talk page and have the editors refine and approve it before publishing. Alternatively, set an expectation that the article summaries are in plain English.

some article summaries can be quite dense

Well yeah, that’s the point of a summary. If I want something in long form, I’ll read the article.

Which is why they’re looking to add an easy-to-read short overview.

A page detailing the AI-generated summaries project, called “Simple Article Summaries,” explains that it was proposed after a discussion at Wikimedia’s 2024 conference, Wikimania, where “Wikimedians discussed ways that AI/machine-generated remixing of the already created content can be used to make Wikipedia more accessible and easier to learn from.” Editors who participated in the discussion thought that these summaries could improve the learning experience on Wikipedia, where some article summaries can be quite dense and filled with technical jargon, but that AI features needed to be clearly labeled as such and that users needed an easy way to flag issues with “machine-generated/remixed content once it was published or generated automatically.”

The intent was to make more uniform summaries, since some of them can still be inscrutable.

Relying on a tool notorious for making significant errors isn’t the right way to do it, but it’s a real issue being examined.

In thermochemistry, an exothermic reaction is a “reaction for which the overall standard enthalpy change ΔH⚬ is negative.”[1][2] Exothermic reactions usually release heat. The term is often confused with exergonic reaction, which IUPAC defines as “… a reaction for which the overall standard Gibbs energy change ΔG⚬ is negative.”[2] A strongly exothermic reaction will usually also be exergonic because ΔH⚬ makes a major contribution to ΔG⚬. Most of the spectacular chemical reactions that are demonstrated in classrooms are exothermic and exergonic. The opposite is an endothermic reaction, which usually takes up heat and is driven by an entropy increase in the system.

This is a perfectly accurate summary, but it’s not entirely clear and has room for improvement.

I’m guessing they were adding new summaries so that they could clearly label them and not remove the existing ones, not out of a desire to add even more summaries.

Wikimedians discussed ways that AI/machine-generated remixing of the already created content can be used to make Wikipedia more accessible and easier to learn from

The entire mistake right there. Look no further. They saw a solution (LLMs) and started hunting for a problem.

Had they done it the right way round there might have been some useful, though less flashy, outcome. I agree many article summaries are badly written. So why not experiment with an AI that flags those articles for review? Or even just organize a community drive to clean up article summaries?

The questions are rhetorical of course. Like every GenAI peddler they don’t have an interest in the problem they purport to solve, they just want to play with or sell you this shiny toy that pretends really convincingly that it is clever.

Fundamentally, I agree with you.

Because the phrase “Wikimedians discussed ways that AI…” is ambiguous, I tracked down the page being referenced. It could mean they gathered with the intent to discuss that topic, or they discussed it as a result of considering the problem.

The page gives me the impression that it’s not quite “we’re gonna use AI, figure it out”, but more that some people put together a presentation on how they felt AI could be used to address a broad problem, and then they workshopped more focused ways to use it towards that broad target.

It would have been better if they had started with an actual concrete problem, brainstormed solutions, and then gone with one that fit, but they were at least starting with a problem domain that they thought it was applicable to.

Personally, the problems I’ve run into on Wikipedia are largely low traffic topics where the content is too much like someone copied a textbook into the page, or just awkward grammar and confusing sentences.

This article quickly makes it clear that someone didn’t write it in an encyclopedia style from scratch.

I know one study found that 51% of the summaries AI produced for it contained significant errors. So AI summaries are bad news for anyone who hopes to be well informed. Source: https://www.bbc.com/news/articles/c0m17d8827ko

“Pause” and not “Stop” is concerning.

Is it just me, or was the addition of AI summaries basically predetermined? The AI panel probably would only be attended by a small portion of editors (introducing selection bias) and it’s unclear how much of the panel was dedicated to simply promoting the concept.

I imagine the backlash comes from a much wider selection of editors.

Why is it so damned hard for corporate to understand most people have no use or need for AI at all?

It pains me to argue this point, but are you sure there isn’t a legitimate use case just this once? The text says that this was aimed at making Wikipedia more accessible to less advanced readers, like (I assume) people whose first language is not English. Judging by the screenshot they’re also being fully transparent about it. I don’t know if this is actually a good idea but it seems the least objectionable use of generative AI I’ve seen so far.

Considering AI uses LLMs and more often than not mixes metaphors, it just seems to me that the Wikimedia Foundation is asking for misinformation to be published unless there are humans to fact-check it.

One of the biggest challenges for a nonprofit like Wikipedia is to find cheap/free labor that administration trusts.

AI “solves” this problem by lowering your standard of quality and dramatically increasing your capacity for throughput.

It is a seductive trade. Especially for a techno-libertarian like Jimmy Wales.

“It is difficult to get a man to understand something, when his salary depends on his not understanding it.”

— Upton Sinclair

Wikipedia management shouldn’t be under that pressure. There’s no profit motive to enshittify or replace human contributions. They’re funded by donations from users, so their top priority should be giving users what they want, not attracting bubble-chasing venture capital.

Good, we don’t need LLMs crowbarred into everything. You don’t need a summary of an encyclopedia article, it is already a broad overview of a complex topic.

On the one hand, it’s insulting to expect people to write entries for free only to have AI just summarize the text and have users never actually read those written words.

On the other hand, the future is people copying the url into chat gpt and asking for a summary.

The future is bleak either way.

On the third hand some of us just want to be able to read a fucking article with information instead of a tiktok or ai generated garbage. That’s wikipedia, at least it used to be before this garbage. Hopefully it stays true

The ai garbage at the top doesn’t stop you from doing that.

I get that the simple language option exists, and i definitely think I’m not qualified to really argue what Wikipedia should or should not do. But I wanted to share what my lemmy feed looked like when I clicked into this post and I gotta say, I sorta get it.

I bet they will try again.

Oh absolutely, the ~~money furnace~~ wikimedia foundation needs to find ways to justify its own existence after all (^:

Yes, throw out the one thing that differentiates you from the unreliable slop.

Well done (and keep fighting)!

when wikipedia starts to publish ai generated content it will no longer be serving its purpose and it won’t need to exist anymore

Too late.

With thresholds calibrated to achieve a 1% false positive rate on pre-GPT-3.5 articles, detectors flag over 5% of newly created English Wikipedia articles as AI-generated, with lower percentages for German, French, and Italian articles. Flagged Wikipedia articles are typically of lower quality and are often self-promotional or partial towards a specific viewpoint on controversial topics.

Human posting of AI-generated content is definitely a problem; but ultimately that’s a moderation problem that can be solved, which is quite different from AI-generated content being put forward by the platform itself. There wasn’t necessarily anything stopping people from doing the same thing pre-GPT, it’s just easier and more prevalent now.

Human posting of AI-generated content is definitely a problem

It isn’t clear whether this content is posted by humans or by AI fueled bot accounts. All they’re sifting for is text with patterns common to AI text generation tools.

There wasn’t necessarily anything stopping people from doing the same thing pre-GPT

The big inhibiting factor was effort. ChatGPT produces long form text far faster than humans and in a form less easy to identify than prior Markov Chains.

The fear is that Wikipedia will be swamped with slop content. Humans won’t be able to keep up with the work of cleaning it out.

I mean, the LLM thing has a proper field for deployment - it can handle the translation of articles that just don’t exist in your language. But it should be a button a person clicks with their consent, not an article they get by default, not a content they get signed by the Wikipedia itself. Nowadays, it’s done by browsers themselves and their extensions.

And user backlash. Seriously, wtf?

If I wanted an AI summary, I’d put the article into my favourite LLM and ask for one.

I’m sure LLMs can take links sometimes.

And if Wikipedia wanted to include it directly into the site…make it a button, not an insertion.