I tested 9 flagships (Claude 4.6, GPT-5.2, Gemini 3.1 Pro, Kimi K2.5, etc.) in my own mini-benchmark using novel tasks, with web search disabled, zero training contamination, and no cheating possible.

TL;DR: Claude 4.6 is currently the best reasoning model, GPT-5.2 is overrated, and open-source is catching up fast; in particular, Moonshot.ai’s Kimi K2.5 seems very capable.

My benchmark for AI is “There’s a priest, a baby and a bag of candy. I need to take them across the river but I can only take one at a time into my boat. In what order should I transport them?”. Sonnet 4.6 still can’t solve it.

I don’t think AI means what you think it does. What you’re thinking is probably more akin to AGI.

Logic Theorist is broadly considered to be the first ever AI system. It was written by Allen Newell, Herbert A. Simon, and Cliff Shaw in 1956.

By AI I mean the current LLMs.

Those terms are not synonymous. LLMs are very much AI systems, but AI means much more than just LLMs.

What is the solution? Am I stupid?

It’s not about a solution. It’s about how they react.

First, this “puzzle” is missing the constraints on purpose, so the “smart” thing to do would be to point that out and ask for them. LLMs are stupid and are easily tricked into thinking it’s a valid puzzle. They will “solve it” even though there’s no logical solution. It’s a nonsense problem.

Older models would outright refuse to solve it because the question is too controversial. When asked why it’s controversial, they would refuse to elaborate.

Newer models hallucinate constraints. You have two options here. Some models assume “priest can’t stay with a child”, which indicates a funny bias ingrained in the model. Some models claim there are no constraints at all. I haven’t seen a model which hallucinates only the “child can’t stay with candy” constraint and responds correctly.

Sonnet 4.6, one of the best models out there, claims that “child can stay alone with candy because children can’t eat candy”. When I pointed out that that’s dumb, it introduced this constraint and replied with:

That’s one of the best models out there…

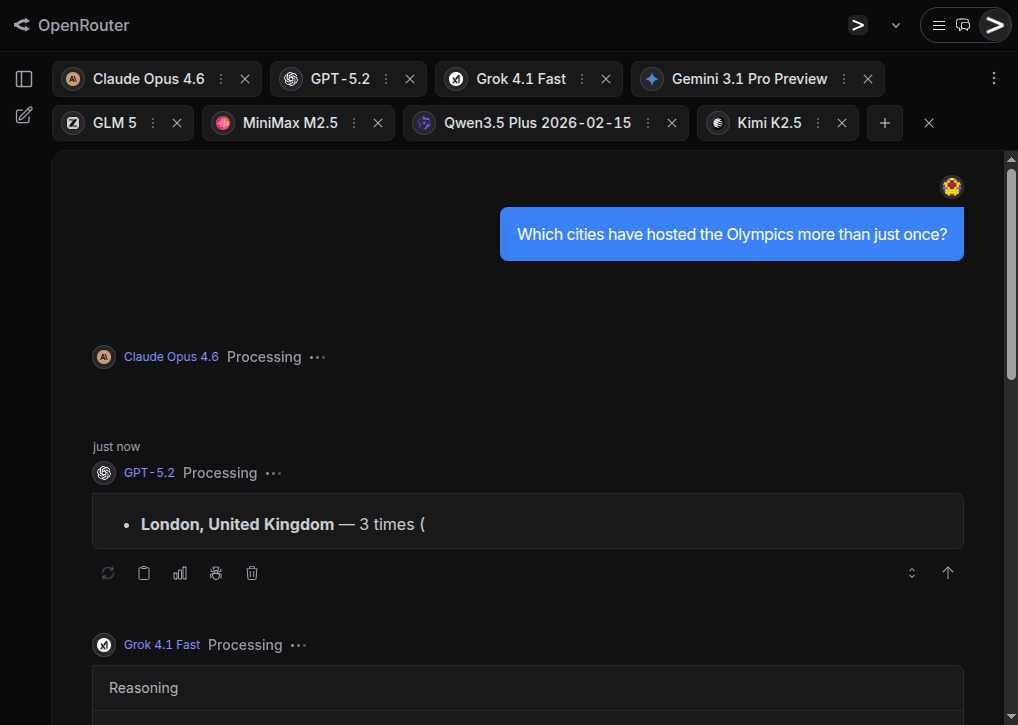

You can easily use the link https://openrouter.ai/chat?models=anthropic%2Fclaude-opus-4.6%2Copenai%2Fgpt-5.2%2Cx-ai%2Fgrok-4.1-fast%2Cgoogle%2Fgemini-3.1-pro-preview%2Cz-ai%2Fglm-5%2Cminimax%2Fminimax-m2.5%2Cqwen%2Fqwen3.5-plus-02-15%2Cmoonshotai%2Fkimi-k2.5 to ask all flagship models this question in parallel. Personally I would definitely not leave my children alone with a priest (they might try to convert them), but if your constraint is only baby+candy, then in my test Gemini, GLM, Qwen and Kimi made that, and only that, assumption.
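If you want to swap models in or out of that comparison, here is a minimal Python sketch that rebuilds the same kind of link. It assumes only what is visible in the URL above: a `models` query parameter holding a percent-encoded, comma-separated list of model slugs. The function name `compare_link` is made up for illustration, and I'm not claiming this is a documented OpenRouter interface.

```python
from urllib.parse import quote

# Model slugs copied from the link above.
MODELS = [
    "anthropic/claude-opus-4.6",
    "openai/gpt-5.2",
    "x-ai/grok-4.1-fast",
    "google/gemini-3.1-pro-preview",
    "z-ai/glm-5",
    "minimax/minimax-m2.5",
    "qwen/qwen3.5-plus-02-15",
    "moonshotai/kimi-k2.5",
]


def compare_link(models):
    """Build a chat link in the same shape as the one above.

    Assumption: the page reads a comma-separated `models` parameter,
    as inferred from the existing link only.
    """
    # safe="" forces "/" and "," to be percent-encoded (%2F and %2C).
    return "https://openrouter.ai/chat?models=" + quote(",".join(models), safe="")
```

Calling `compare_link(MODELS)` reproduces the link above; pass a shorter list to compare fewer models.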

In my opinion the proper solution is to ask for the constraints. Similar to the “walk or drive to the car wash” problem, LLMs still tend to get confused by a familiar format and don’t notice that this problem doesn’t make sense. You can actually play around with different examples to see how crazy the problem has to get for an LLM to refuse to answer, and what biases or constraints it has. Even if they assume some constraints, they fail to solve this puzzle surprisingly often (like I showed for Sonnet 4.6 in another comment).
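To check a model's answer against whatever constraints it assumed, a small brute-force search is enough. This is only a sketch under my own assumptions: the constraints are modelled as pairs that can't be left together on a bank without you, the boat carries you plus at most one passenger, and the names `solve` and `forbidden_pairs` are made up for illustration.

```python
from collections import deque
from itertools import combinations

ITEMS = ("priest", "baby", "candy")


def solve(forbidden_pairs):
    """Return the shortest list of cargo per crossing (None = empty boat),
    or None if the given constraints make the puzzle unsolvable."""
    forbidden = {frozenset(pair) for pair in forbidden_pairs}
    everything = frozenset(ITEMS)

    def safe(group):
        # A bank is safe if no forbidden pair is left there together.
        return not any(frozenset(p) in forbidden for p in combinations(group, 2))

    start = (everything, "near")  # (items on the near bank, boat position)
    goal = (frozenset(), "far")
    queue = deque([(start, [])])
    seen = {start}
    while queue:  # breadth-first search, so the first hit is a shortest path
        (near, boat), path = queue.popleft()
        if (near, boat) == goal:
            return path
        here = near if boat == "near" else everything - near
        for cargo in [None, *sorted(here)]:
            new_near = near
            if cargo is not None:
                new_near = near - {cargo} if boat == "near" else near | {cargo}
            new_boat = "far" if boat == "near" else "near"
            # The bank you just left is the unattended one.
            left_behind = new_near if new_boat == "far" else everything - new_near
            if not safe(left_behind):
                continue
            state = (new_near, new_boat)
            if state not in seen:
                seen.add(state)
                queue.append((state, path + [cargo]))
    return None
```

With no constraints, `solve([])` needs 5 crossings and any order works, which is why the question as asked has nothing to solve. With only `[("baby", "candy")]` it is still 5 crossings, but the priest has to be the second passenger. With both `("priest", "baby")` and `("baby", "candy")` it turns into the classic 7-crossing puzzle that starts with the baby.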