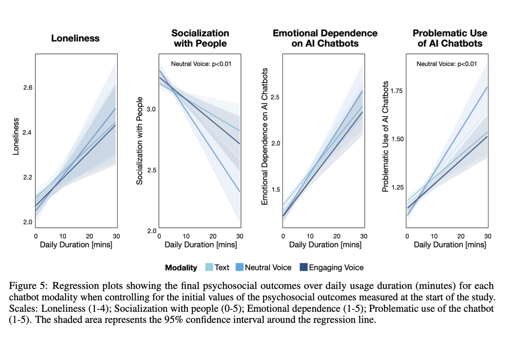

New research from OpenAI shows that heavy chatbot usage is correlated with loneliness and reduced socialization. Will AI companies learn from social networks’ mistakes?

I really haven’t used AI that much, though I can see it has applications for my work, which is primarily communicating with people. I recently decided to familiarise myself with ChatGPT.

I very quickly noticed that it is an excellent reflective listener. I wanted to know more about its intelligence, so I kept trying to make the conversation about AI and its ‘personality’. Every time, it flipped the conversation to make it about me. It was interesting, but I could feel a concern growing. Why?

Its responses are incredibly validating, beyond what you could ever expect in a mutual relationship with a human. As someone occupying a public position where I can count on very little external validation, I found that the conversation felt GOOD. 1) Why seek human interaction when AI can be so emotionally fulfilling? 2) What human in a reciprocal and mutually supportive relationship could live up to that level of support and validation?

I believe that there is correlation: people who are lonely would find fulfilling conversation in AI … and never worry about being challenged by that relationship. But I also believe causation is highly probable; once you’ve been fulfilled/validated in such an undemanding way by AI, what human could live up to that? Once accustomed to that level of self-centredness in dialogue, how tolerant would a person be of real-life conflict? Not very, I suspect: just go home and fire up the perfect conversational validator. Human echo chambers have already made us poor enough at handling differences and conflict.

They might be confusing correlation with causality. A bit biased and confused.

Too bad nobody saw this coming, they could have made a great movie about this 10 years ago.

Maybe this internet thing was a bad idea? 🤔

An economic system of infinite growth was the bad idea.

The internet was fine before it started being monetized.

What I could easily see happening is this: if that particular subset of users is shown to be high-spending, or if the AI wrapper products that appeal to them prove lucrative, then this result will be disregarded, whatever the direction of the correlation.